Improving Quantitative Assessment Methods for Sensitive Data Leakage Risks in Large Models

CLC number: TP309  Document code: A  Article ID: 2095-2945(2025)34-0123-05

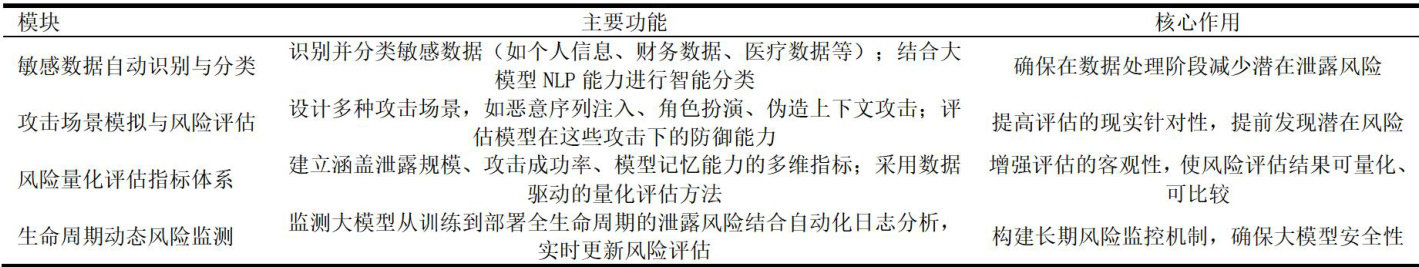

Abstract: With the widespread use of Large Language Models (LLMs), the risk of sensitive data leakage has become an urgent problem. To address the shortcomings of existing quantitative assessment methods for sensitive data leakage risks, this paper proposes an improved assessment method. First, we analyze the privacy disclosure risks that LLMs may cause during the training and inference phases, including the disclosure of sensitive information in training data and prompt attacks during the inference phase. Second, we summarize the limitations of existing protection methods, such as data preprocessing, differential privacy, and forgetting (unlearning) methods. On this basis, this paper proposes an improved evaluation framework comprising technical modules such as sensitive data identification and classification, risk scenario simulation, and the construction of quantitative evaluation indicators. By introducing multi-level, multi-dimensional evaluation indicators combined with the latest privacy protection technologies, this paper aims to provide a more comprehensive and objective quantitative evaluation method for LLM sensitive data leakage risks, thereby supporting the secure application of LLMs.

Keywords: large language model; sensitive data disclosure; privacy protection; quantitative risk assessment; differential privacy

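In recent years, large language models such as ChatGPT and GPT-4 have made significant progress in natural language processing and are widely used in tasks such as text generation, machine translation, and dialogue systems.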