Agricultural Named Entity Recognition Based on Chain-of-Thought Distillation and Counterfactual Reasoning


CLC number: S126 Document code: A Article ID: 1001-411X(2026)01-0086-08

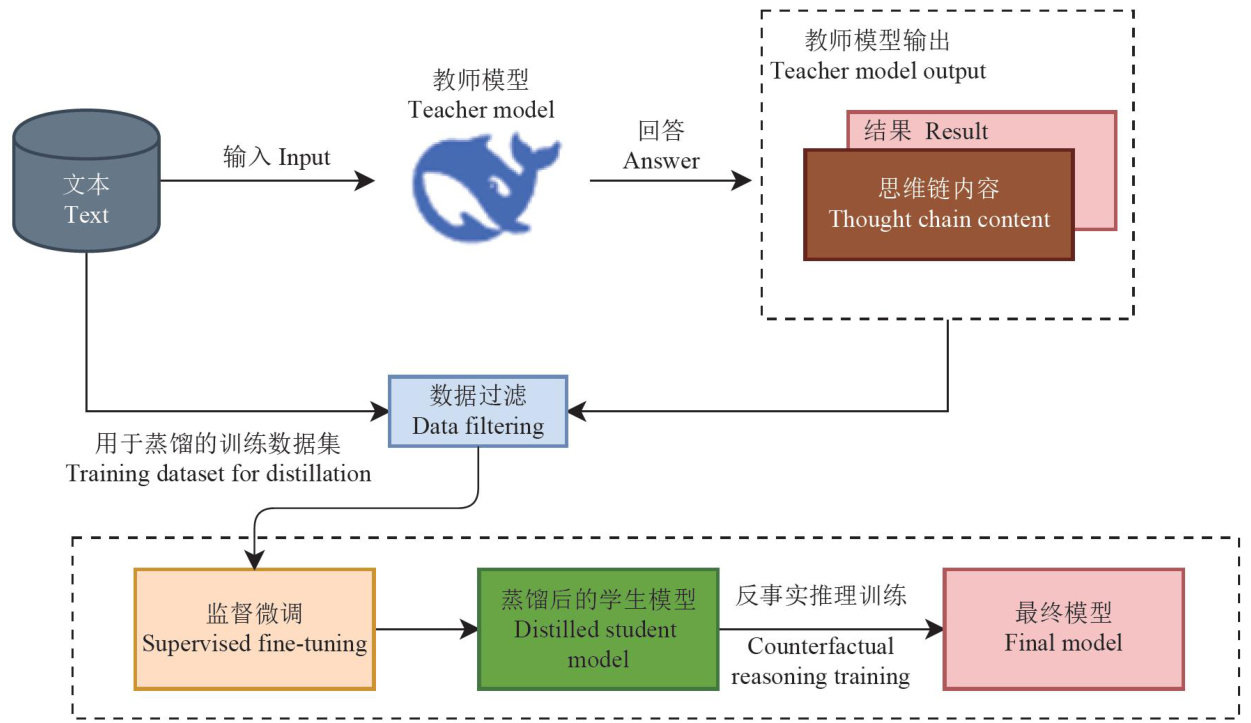

Abstract: 【Objective】 To address the hallucinations, contextual logical inconsistencies, and inability to run on low-resource devices that arise when large language models perform named entity recognition (NER) in agriculture. 【Method】 Using DeepSeek with 671 billion parameters (DeepSeek-671B) as the teacher model, domain knowledge was transferred to student models with fewer parameters. The student models were low-parameter versions of DeepSeek, Qwen, and Llama (1.5 billion, 7.0 billion, and 14.0 billion parameters, abbreviated as 1.5B, 7.0B, and 14B respectively), which underwent distillation and counterfactual reasoning training. Model performance was validated experimentally on CropDiseaseNer, a specialized agricultural disease dataset. 【Result】 A comparison of the distilled student models showed that DeepSeek-14B achieved an entity recognition F1 score of 89.60% while requiring only 2.08% of the parameters of the teacher model. It significantly outperformed both the general-purpose large model GPT-mini-14B (F1 score: 57.64%) and the domain-adapted model GLiNER (F1 score: 82.96%), which is based on a general LLM. Further analysis revealed that the DeepSeek student model, which shares the teacher's architecture, was superior to models with different architectures in recognizing long-tail categories such as disease entities and pathogen genus names, owing to its parameter alignment advantage. 【Conclusion】 This study validates the effectiveness of knowledge distillation for NER tasks in the agricultural domain and offers a novel solution for entity recognition in resource-constrained scenarios.

Key words: Agriculture; Large language model; Knowledge distillation; Named entity recognition
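
The F1 scores quoted in the abstract are entity recognition scores. As a minimal sketch of how such a score is commonly computed, the Python below assumes exact-match scoring on (entity text, entity type) pairs; the abstract does not state the paper's matching criterion, and the function name `entity_f1`, the type labels, and the sample entities are hypothetical illustrations, not data from CropDiseaseNer.

```python
def entity_f1(gold, pred):
    """Exact-match F1 over sets of (entity_text, entity_type) pairs.

    Assumption: an entity counts as correct only if both its text and
    its type match a gold entity exactly.
    """
    gold_set, pred_set = set(gold), set(pred)
    true_positives = len(gold_set & pred_set)
    precision = true_positives / len(pred_set) if pred_set else 0.0
    recall = true_positives / len(gold_set) if gold_set else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical example: two of three predictions match the gold annotations.
gold = [("rice blast", "DISEASE"), ("Magnaporthe", "PATHOGEN_GENUS"), ("rice", "CROP")]
pred = [("rice blast", "DISEASE"), ("rice", "CROP"), ("leaf", "CROP")]
score = entity_f1(gold, pred)
print(f"F1 = {score:.4f}")
```

Under this exact-match assumption, a missed long-tail entity such as a pathogen genus name lowers recall, and a spurious prediction lowers precision.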

With the rapid development of smart agriculture, the agricultural sector is facing the challenge of an "information explosion".