Data pruning method based on a consistency committee of small-scale heterogeneous language models


CLC number: TP391 Document code: A Article ID: 1001-3695(2026)01-013-0110-10

doi: 10.19734/j.issn.1001-3695.2026.05.0139

Data pruning method based on consistency committee of small-scale heterogeneous language models

Wang Kaiwen, Wang Yunzhe†, Tan Wei, Fu Qiming, Lu You, Chen Jianping (School of Electronic and Information Engineering, Suzhou University of Science and Technology, Suzhou, Jiangsu, China)

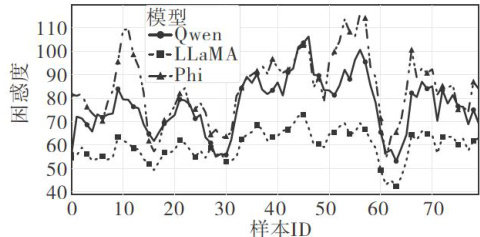

Abstract: The fine-tuning performance of large language models (LLMs) strongly depends on the quality of the training data. Existing single-model, perplexity-based data evaluation methods have two limitations: perplexity bias, where low-perplexity samples can still be mispredicted, and cross-model divergence, where different models produce inconsistent perplexity for the same samples. To address these issues, this study proposes a method based on the consistency of a heterogeneous committee of small language models, which evaluates data value from two perspectives. The method calculates the coefficient of variation of perplexity across multiple models to measure model divergence. It also computes prediction difficulty by combining the similarity between predicted outputs and reference answers. Based on these two evaluations, the algorithm defines the MMCS (multi-model consistency score) metric to select high-quality training data. Experimental results show that data filtered by MMCS achieves better fine-tuning performance than traditional methods on two mainstream LLMs and three public datasets, obtaining the best results in 27 out of 36 comparative experiments. This finding provides a new approach to efficient data pruning. The evaluation method based on multi-model divergence proves effective in improving the marginal utility of training data.

Keywords: large language model; data pruning; multi-model committee; perplexity
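
The two signals named in the abstract, the coefficient of variation of perplexity across committee models and a similarity-based prediction difficulty, can be illustrated with a minimal Python sketch. This is not the paper's MMCS definition, which the abstract does not give: the function names, the use of `difflib.SequenceMatcher` as the similarity measure, and the weighted sum with `alpha` are all assumptions made purely for illustration, and the sketch makes no claim about whether high or low scores should be kept.

```python
import math
from difflib import SequenceMatcher
from statistics import mean, pstdev


def perplexity(token_logprobs):
    """Perplexity of one sample from its per-token natural-log probabilities."""
    return math.exp(-mean(token_logprobs))


def coefficient_of_variation(values):
    """Population standard deviation divided by the mean (0.0 if the mean is 0)."""
    mu = mean(values)
    return pstdev(values) / mu if mu else 0.0


def prediction_difficulty(predictions, reference):
    """One minus the mean string similarity between each model's prediction
    and the reference answer. SequenceMatcher is a stand-in similarity
    measure (assumption); the paper may use a different one."""
    sims = [SequenceMatcher(None, p, reference).ratio() for p in predictions]
    return 1.0 - mean(sims)


def toy_consistency_score(ppls, predictions, reference, alpha=0.5):
    """Hypothetical combination of the two signals as a weighted sum.
    The actual MMCS formula is not stated in the abstract."""
    divergence = coefficient_of_variation(ppls)
    difficulty = prediction_difficulty(predictions, reference)
    return alpha * divergence + (1.0 - alpha) * difficulty


if __name__ == "__main__":
    # Perplexities of one sample under three hypothetical committee models.
    ppls = [perplexity([math.log(0.5)] * 4),
            perplexity([math.log(0.25)] * 4),
            perplexity([math.log(0.4)] * 4)]
    preds = ["Paris is the capital", "The capital is Paris", "Lyon"]
    print(toy_consistency_score(ppls, preds, "Paris is the capital"))
```

In a real pipeline the per-token log probabilities and predictions would come from each small model in the committee; here they are hard-coded so the sketch runs with the standard library alone.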

0 Introduction

With the rapid development of large language models (LLMs), competition over optimizing their performance has grown increasingly intense.